Best Data Management Solutions & MDM Tools in 2026

By 2026, data management and master data management (MDM) have changed considerably. Enterprises now deal with much larger volumes of data, spread across cloud platforms, SaaS tools, legacy systems, and real-time pipelines. Growing governance pressure and more advanced analytics use cases have made coordination, consistency, and accountability harder to maintain with older centralized models.

A strong data warehouse foundation is often enough to support reporting and analytics for smaller organizations. In those cases, data warehouse services may be the most practical starting point. Enterprises usually face additional complexities, such as multiple business units, shared master records, stricter governance requirements, and cross-system dependencies that go beyond warehousing alone.

In this article, we look at how modern data architectures address these challenges as part of a broader data management strategy. The focus is on roles, trade-offs, and architectural fit, not a simple MDM tools comparison. Understanding how data management, MDM, governance, and integration work together is now more important than choosing individual tools.

What Data Management and MDM Mean in 2026

In 2026, data management refers to the coordinated practices that control how data is created, governed, shared, and used across an organization. It includes quality controls, ownership models, integration logic, and access policies. Data now lives across cloud platforms, SaaS tools, and operational systems at the same time. This distribution has changed how organizations define control and trust.

As data environments have changed, MDM has had to change with them. Instead of serving as a single central store, it now works to keep identities and relationships aligned across many systems. Customer, product, and supplier data are usually created and updated in several places at once. MDM brings order to that spread without forcing full centralization.

Older definitions assumed stable schemas, batch updates, and limited system sprawl. Those assumptions no longer hold in environments shaped by cloud migration, API-driven tools, and near-real-time analytics. Data changes faster than manual governance processes can manage.

Several core capabilities intersect within modern data architectures:

- Data governance frameworks. These define ownership, stewardship, and access rights. They clarify who can create, approve, or modify data across systems.

- Data quality controls. These monitor accuracy, completeness, and consistency. Quality checks now operate continuously rather than through periodic cleanup efforts.

- Integration and orchestration layers. These connect operational platforms and analytical environments. They manage synchronization, transformation, and event-based data flows.

- Metadata and cataloging capabilities. These document lineage, definitions, and usage context. They provide visibility for analytics teams and AI systems.

In practice, these capabilities are tightly linked. Governance policies rely on quality metrics to remain enforceable. Quality monitoring depends on metadata to identify root causes. Integration workflows determine where authoritative data is created and consumed.

MDM sits at the center of these interactions. It supports shared identifiers, hierarchy management, and cross-domain relationships that analytics and AI models depend on. For many organizations, maintaining multiple authoritative sources is unavoidable. MDM provides structure without requiring a single system of record.
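The idea of shared identifiers without a single system of record can be illustrated with a small cross-reference registry. This is a minimal sketch, not how any particular MDM product works: the class name `CrossReferenceRegistry`, its methods, the system names, and the record IDs are all hypothetical.

```python
import uuid
from typing import Dict, List, Optional, Tuple

SourceKey = Tuple[str, str]  # (source system, local record id)


class CrossReferenceRegistry:
    """Hypothetical registry that links local keys to shared master IDs."""

    def __init__(self) -> None:
        self._xref: Dict[SourceKey, str] = {}

    def link(self, system: str, local_id: str,
             master_id: Optional[str] = None) -> str:
        """Attach a source record to a master ID, minting one if none is given."""
        key = (system, local_id)
        if key not in self._xref:
            self._xref[key] = master_id or f"M-{uuid.uuid4().hex[:8]}"
        return self._xref[key]

    def master_id_for(self, system: str, local_id: str) -> Optional[str]:
        """Look up the shared identifier for a record in one system."""
        return self._xref.get((system, local_id))

    def members(self, master_id: str) -> List[SourceKey]:
        """List every source record that shares the given master ID."""
        return sorted(key for key, mid in self._xref.items() if mid == master_id)


registry = CrossReferenceRegistry()
customer = registry.link("crm", "C-1001")            # CRM creates the customer
registry.link("erp", "0000457", master_id=customer)  # ERP copy joins the same entity
print(registry.members(customer))
```

Each system keeps its own record and its own local key. Only the mapping is shared, which is what lets several authoritative sources coexist.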

This shift has also changed how organizations look at data tools. Features matter less on their own, while fit within the existing data ecosystem matters much more. Data teams now assemble governance platforms, integration layers, catalogs, and MDM based on their data maturity and operating model. In that setup, enterprise data management tools act as building blocks within the architecture, not self-contained solutions.

How We Selected the Best Data Management & MDM Solutions

Our framework reflects how organizations evaluate the best data management solutions in complex environments. The emphasis is on architectural alignment, scalability, and operational readiness. These factors influence sustainability more than short-term functionality. The following criteria guided the review:

- Enterprise relevance. The solution is built for large organizations that operate across many data domains and business units. It works alongside operational systems, analytics platforms, and external data sources running at the same time.

- Scalability and performance. The platform handles growing data volumes, more users, and new integrations. It stays stable as usage grows across business units and regions.

- Operational maturity. The technology demonstrates proven deployment models and long-term enterprise adoption. It supports production-scale use across core business systems.

- Interoperability and extensibility. The platform connects easily with data quality, integration, and metadata tools. New capabilities can be added without reworking the underlying architecture.

- Governance and control capabilities. Data teams define ownership, manage stewardship, and enforce policies through the platform. It also supports audits, access control, and compliance needs.

These criteria reflect common enterprise constraints. Most organizations operate mixed stacks and incremental modernization programs. Within this context, master data management software serves as an alignment layer rather than a standalone system.

Data Management Solutions & Best MDM Tools

Modern data architectures rely on multiple platforms working together. Data management and MDM tools usually fall into distinct functional layers. In this section, we group commonly used solutions by the role they play within enterprise environments.

MDM Core Platforms

These platforms focus on mastering core business entities such as customers, products, suppliers, and reference data. They manage identity resolution, hierarchies, relationships, and synchronization across systems. Organizations typically deploy them as the central alignment layer between operational and analytical environments.

- Informatica is typically used as a centralized data management platform within large enterprises. It supports MDM, data integration, quality management, and governance workflows across hybrid and multi-cloud environments. Organizations often adopt it when consistency and cross-system coordination are priorities.

- Reltio provides a cloud-native MDM platform designed for distributed data environments. It is commonly used to manage customer and product data across SaaS ecosystems. The platform fits architectures that emphasize real-time synchronization and API-driven integration.

- SAP Master Data Governance is typically deployed within SAP-centric enterprise environments. It manages master data consistency across ERP and operational systems. The platform aligns closely with organizations standardizing on SAP for core business processes.

- Oracle Enterprise Data Management helps govern and synchronize master and reference data across Oracle-based systems. It is commonly adopted by organizations operating Oracle ERP and financial platforms. The solution supports hierarchical and financial data management use cases.

- Ataccama combines data quality, MDM, and governance capabilities within a unified platform. It is commonly used to support trusted data initiatives across analytics and operational systems. The platform fits organizations seeking strong quality automation.

Governance & Metadata Layer

These platforms focus on visibility, accountability, and control, rather than on mastering data directly. They help manage ownership, policies, lineage, and business definitions. Most organizations connect them to MDM systems, analytics platforms, and integration tools as part of a broader data setup.

- Collibra is primarily used as a data governance and data intelligence layer. It supports stewardship workflows, policy management, and metadata visibility. Organizations often pair it with MDM platforms rather than using it as a replacement.

- Microsoft Purview is used for data governance, cataloging, and lineage inside Microsoft environments. Data teams rely on it to understand how data moves across Azure services and connected SaaS tools.

- IBM InfoSphere is often part of enterprise data stacks that require strong governance and lifecycle management. It is commonly adopted in regulated industries where compliance rules are strict and hard to manage. The platform is closely integrated with IBM’s analytics and data services, which makes it a natural fit for organizations already using that ecosystem.

Integration & Data Flow Layer

These tools handle how data moves, changes shape, and gets checked as it travels between systems. They do not manage master records themselves, but they play a key role in supplying MDM platforms and pushing trusted data to downstream systems.

- Talend is widely used for data integration and quality management. It is not an MDM platform on its own, but it plays an important supporting role. Data teams use it to feed master data, keep systems in sync, and validate data as it moves through cloud data warehouses and analytics pipelines.

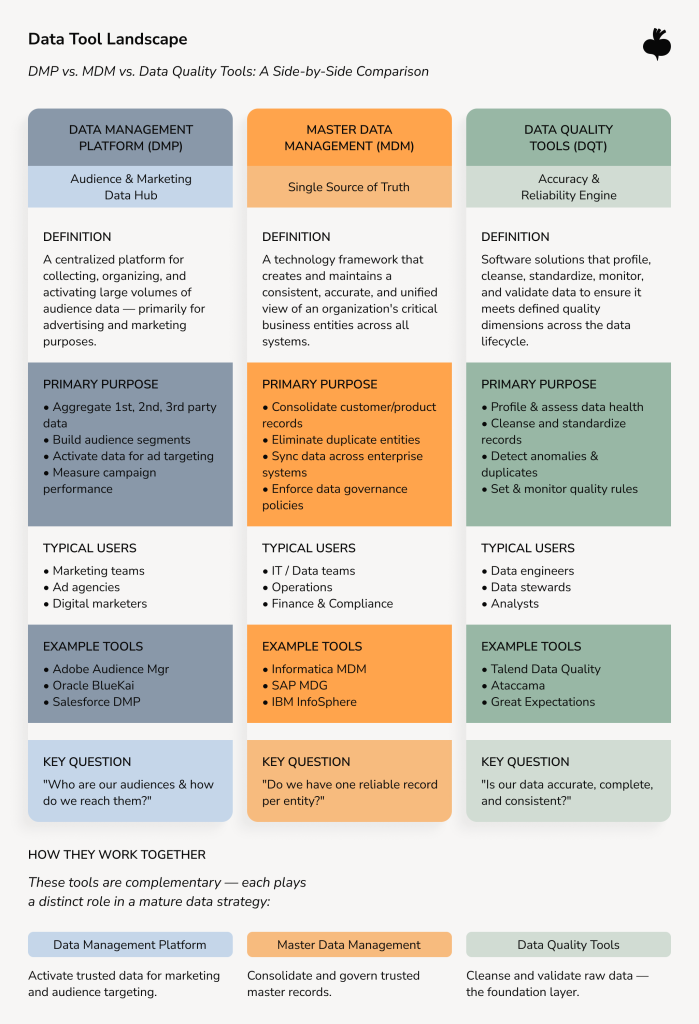

Data Management Platforms vs MDM vs Data Quality Tools

Enterprise data management tools assist in keeping data in motion, organized, and under control. They link operational applications, analytics platforms, and cloud services into a more connected data environment. They provide orchestration and visibility, but they do not resolve entity identity by themselves.

MDM platforms align core business entities such as customers, products, and suppliers. They handle deduplication, identity resolution, and hierarchies across systems. This role sits at the center of many of the best master data management solutions used in large enterprises.
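To make deduplication and identity resolution more concrete, here is a simplified sketch that groups records from different systems into entities. The matching rule (exact email, or a similar name plus the same postcode), the 0.85 threshold, the field names, and the sample records are all assumptions chosen for illustration. Production MDM platforms use far richer matching logic.

```python
from difflib import SequenceMatcher
from typing import Dict, List


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences do not matter."""
    return " ".join(text.lower().split())


def is_match(a: dict, b: dict, name_threshold: float = 0.85) -> bool:
    """Assumed rule: same email, or similar name with the same postcode."""
    if a["email"] and normalize(a["email"]) == normalize(b["email"]):
        return True
    ratio = SequenceMatcher(None, normalize(a["name"]), normalize(b["name"])).ratio()
    return ratio >= name_threshold and a["postcode"] == b["postcode"]


def resolve(records: List[dict]) -> List[List[str]]:
    """Cluster matching records with a small union-find structure."""
    parent = list(range(len(records)))

    def find(i: int) -> int:
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    for i in range(len(records)):
        for j in range(i + 1, len(records)):
            if is_match(records[i], records[j]):
                parent[find(j)] = find(i)

    clusters: Dict[int, List[str]] = {}
    for i, record in enumerate(records):
        clusters.setdefault(find(i), []).append(record["id"])
    return sorted(clusters.values())


records = [
    {"id": "crm:1", "name": "Jane Doe", "email": "jane.doe@example.com", "postcode": "10001"},
    {"id": "erp:7", "name": "DOE, JANE", "email": "Jane.Doe@example.com ", "postcode": "10001"},
    {"id": "web:3", "name": "Jane  Doe", "email": "", "postcode": "10001"},
    {"id": "crm:2", "name": "John Smith", "email": "john@example.com", "postcode": "94105"},
]
print(resolve(records))
```

The pairwise comparison is quadratic in the number of records, so it only suits small samples. Real implementations typically narrow the candidate pairs before comparing them.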

Data quality tools validate, standardize, and monitor data as it moves through pipelines and applications. They detect inconsistencies and errors early. They often form the core of data quality programs, but on their own they cannot define ownership or authority.

Some overlap between tools is normal, but problems appear when each layer operates on its own. MDM cannot correct poor source data without proper quality controls in place. Data quality tools can highlight issues, but they cannot decide which system should be trusted.
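The split between spotting issues and deciding which system to trust can be sketched as a survivorship rule. In this hypothetical example, a quality check decides whether a value is usable, and a per-attribute source ranking decides which system wins. The `SOURCE_PRIORITY` ranking, the system names, and the simplistic email check are assumptions for illustration only.

```python
from typing import Dict, Optional

# Hypothetical trust ranking per attribute: earlier systems win.
SOURCE_PRIORITY = {
    "email": ["crm", "web", "erp"],
    "billing_address": ["erp", "crm", "web"],
}


def is_valid(attribute: str, value: Optional[object]) -> bool:
    """Quality check: says whether a value is usable, not who owns it."""
    if value is None or not str(value).strip():
        return False
    if attribute == "email":
        return "@" in str(value)  # deliberately simplistic check
    return True


def build_golden_record(records_by_source: Dict[str, dict]) -> dict:
    """Survivorship: take the first valid value from the most trusted source."""
    golden: Dict[str, Optional[dict]] = {}
    for attribute, ranking in SOURCE_PRIORITY.items():
        golden[attribute] = None
        for source in ranking:
            value = records_by_source.get(source, {}).get(attribute)
            if is_valid(attribute, value):
                golden[attribute] = {"value": value, "source": source}
                break
    return golden


sources = {
    "crm": {"email": "not-an-email", "billing_address": "1 Old Street"},
    "web": {"email": "jane@example.com"},
    "erp": {"email": "", "billing_address": "22 Main Street"},
}
print(build_golden_record(sources))
```

Without the quality check, the invalid CRM email would survive because CRM ranks first. Without the ranking, nothing would decide between the two billing addresses.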

The Future of Data Management

In 2026, enterprise data strategies are moving away from tight central control and toward models that can scale more easily. Organizations now handle far more data, faster data flows, and a growing number of users across cloud platforms.

That pressure is also visible in the market itself: the global enterprise data management market reached about USD 122.84 billion in 2025 and is expected to grow to nearly USD 226 billion by 2031.

The following trends define the future of data management.

Data mesh adoption expands

Data mesh promotes domain-based ownership instead of centralized data teams. Business units manage their own data products while shared governance standards remain in place. This model improves speed and scalability but increases the need for strong coordination and metadata visibility.

Data fabric connects distributed systems

Data fabric focuses on connecting data and making it easy to access, especially in hybrid and multi-cloud environments. It does this without pulling data into a single physical store. Data and analytics teams use data fabric to make data easier to find and work with across systems.

Governance-by-design replaces manual enforcement

Governance is moving upstream into architecture and pipelines. Policies are applied automatically through metadata, access rules, and workflow controls. This approach reduces reliance on documentation and manual review.
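As a rough illustration of policies applied through metadata, the sketch below masks columns based on classification tags and role clearances. The tag names, roles, and the `apply_policy` function are hypothetical. In practice the tags would usually come from a catalog or governance platform, not from a hard-coded dictionary.

```python
from typing import Dict, Set

# Hypothetical column classifications, as a metadata catalog might supply them.
COLUMN_TAGS: Dict[str, Set[str]] = {
    "customer_id": set(),
    "email": {"pii"},
    "lifetime_value": {"financial"},
}

# Hypothetical clearances: the tags each role is allowed to read.
ROLE_CLEARANCE: Dict[str, Set[str]] = {
    "marketing": set(),
    "analyst": {"financial"},
    "steward": {"pii", "financial"},
}


def apply_policy(row: dict, role: str) -> dict:
    """Mask every column whose tags are not fully covered by the role."""
    allowed = ROLE_CLEARANCE.get(role, set())
    masked = {}
    for column, value in row.items():
        # Columns missing from the catalog are treated as unclassified
        # and masked for everyone (deny by default).
        tags = COLUMN_TAGS.get(column, {"unclassified"})
        masked[column] = value if tags <= allowed else "***"
    return masked


row = {"customer_id": "C-1", "email": "jane@example.com",
       "lifetime_value": 1200, "notes": "vip"}
print(apply_policy(row, "analyst"))
```

Because the rule reads tags and not column names, a newly tagged column is covered without changing pipeline code.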

AI-driven data quality gains traction

Machine learning now plays a bigger role in spotting anomalies, duplicate records, and schema changes. This makes it practical to monitor data quality across large and complex environments. Automation alone is not enough, though: flagged issues still need owners who decide how they are resolved.
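The sketch below shows the general shape of automated anomaly flagging, using a plain statistical check as a stand-in for a learned model. It applies a modified z-score based on the median and the median absolute deviation, with the conventional 0.6745 constant and 3.5 cutoff. The daily row counts are invented sample data.

```python
from statistics import median
from typing import List, Sequence


def flag_anomalies(values: Sequence[float], threshold: float = 3.5) -> List[int]:
    """Return the indexes of values with an unusually high modified z-score."""
    center = median(values)
    mad = median(abs(v - center) for v in values)  # median absolute deviation
    if mad == 0:
        # More than half the values are identical: flag anything that differs.
        return [i for i, v in enumerate(values) if v != center]
    return [
        i for i, v in enumerate(values)
        if 0.6745 * abs(v - center) / mad > threshold
    ]


# Invented daily row counts for one pipeline; day 5 looks like a partial load.
daily_row_counts = [1000, 1020, 990, 1010, 1005, 120, 995]
print(flag_anomalies(daily_row_counts))
```

The check flags the suspicious day but says nothing about the cause, which is why a data steward or pipeline owner still has to review what it finds.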

Taken together, these shifts point to a broader change in how enterprises manage data. Scale comes from aligning architecture, governance, and domain ownership. Centralized tooling on its own rarely delivers that outcome.

When Enterprises Need Custom Development Alongside MDM Tools

Off-the-shelf MDM platforms provide a strong foundation, but they rarely cover the full scope of enterprise data environments. Most organizations operate across legacy systems, cloud platforms, and SaaS tools that were not built to work together. As a result, MDM implementations often require an engineering and consulting partner to help enterprises design, integrate, and extend data platforms for production use.

Custom integration is a common requirement. Enterprises frequently need tailored APIs, connectors, or event-driven pipelines to move data between source systems, MDM hubs, and analytics platforms.
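An event-driven pipeline between a source system and an MDM hub can be sketched as a consumer that applies change events idempotently. The `ChangeEvent` shape, the `HubState` class, and the version-number convention are assumptions made for this example. Real connectors depend on the message broker and MDM platform in use.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

RecordKey = Tuple[str, str]  # (source system, local record id)


@dataclass
class ChangeEvent:
    """Hypothetical change event emitted by a source system."""
    system: str
    record_id: str
    version: int    # assumed to increase with every change to the record
    payload: dict   # the fields that changed


class HubState:
    """Minimal in-memory stand-in for the hub's copy of source records."""

    def __init__(self) -> None:
        self.records: Dict[RecordKey, dict] = {}
        self.versions: Dict[RecordKey, int] = {}

    def apply(self, event: ChangeEvent) -> bool:
        """Apply an event once; skip duplicates and stale, out-of-order events."""
        key = (event.system, event.record_id)
        if event.version <= self.versions.get(key, 0):
            return False
        self.records[key] = {**self.records.get(key, {}), **event.payload}
        self.versions[key] = event.version
        return True


hub = HubState()
events = [
    ChangeEvent("crm", "C-1", 1, {"name": "Jane Doe"}),
    ChangeEvent("crm", "C-1", 2, {"email": "jane@example.com"}),
    ChangeEvent("crm", "C-1", 1, {"name": "Jane Doe"}),  # redelivered duplicate
]
applied = [hub.apply(event) for event in events]
print(applied, hub.records)
```

Checking the version makes redelivery harmless, which matters because many messaging systems deliver events at least once. Skipping stale events is a simplification: if events carry only partial changes, a late event may hold fields that this sketch would discard.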

Data quality and validation often extend beyond built-in capabilities. Many organizations implement custom QA rules, automated testing, and domain-specific checks as part of broader data quality assurance efforts tied to internal processes. For example, our development team often helps companies translate business rules into automated validation logic, integrate quality checks into data pipelines, and scale testing as data volumes and use cases grow.
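Translating business rules into automated validation logic might look like the sketch below. Each rule is a named check, and a pipeline step separates clean records from quarantined ones. The supplier fields, the three rules, and the 120-day limit are invented examples, not rules from any specific project.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Rule:
    name: str
    check: Callable[[dict], bool]


def has_tax_id(record: dict) -> bool:
    return bool(str(record.get("tax_id") or "").strip())


def payment_terms_in_range(record: dict) -> bool:
    days = record.get("payment_terms_days")
    return isinstance(days, int) and 0 <= days <= 120


def country_is_iso2_shaped(record: dict) -> bool:
    # Checks only the shape (two uppercase letters), not the official code list.
    country = record.get("country")
    return (isinstance(country, str) and len(country) == 2
            and country.isalpha() and country.isupper())


# Hypothetical business rules for supplier records.
RULES = [
    Rule("has_tax_id", has_tax_id),
    Rule("payment_terms_in_range", payment_terms_in_range),
    Rule("country_is_iso2_shaped", country_is_iso2_shaped),
]


def validate(record: dict) -> List[str]:
    """Return the names of all rules the record fails."""
    return [rule.name for rule in RULES if not rule.check(record)]


def split_batch(records: List[dict]) -> Tuple[List[dict], List[Tuple[dict, List[str]]]]:
    """Pipeline step: pass clean records on, quarantine the rest with reasons."""
    passed, quarantined = [], []
    for record in records:
        failures = validate(record)
        if failures:
            quarantined.append((record, failures))
        else:
            passed.append(record)
    return passed, quarantined


batch = [
    {"supplier_id": "S-1", "tax_id": "DE123456789", "payment_terms_days": 30, "country": "DE"},
    {"supplier_id": "S-2", "tax_id": "", "payment_terms_days": 365, "country": "Germany"},
]
passed, quarantined = split_batch(batch)
print(len(passed), quarantined[0][1])
```

Keeping rules as named, independent checks makes it easier to add domain-specific rules over time and to report which rule a record failed.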

Analytics needs introduce a new kind of complexity. MDM establishes trusted entities, but it does not handle analytical models or reporting on its own. To bridge that gap, enterprises usually rely on transformation layers and a scalable data warehouse to shape mastered data for analytics, reporting, and AI use cases.

How to Choose the Best Data Management Solution for Enterprises

Architectural constraints, governance maturity, and internal capabilities tend to matter more than individual features. The aim is to create alignment across systems and departments, not to optimize a single tool.

The factors below reflect how enterprises typically frame decisions when reviewing top master data management solutions, tools, and related platforms.

- Data complexity. When data comes from many operational systems and external sources, integration and identity management become harder. Simpler data environments often require fewer coordination layers.

- Regulatory and compliance requirements. Highly regulated industries require auditability, lineage, and access controls. Governance expectations often influence architecture as much as data volume.

- Scale and performance needs. As data volumes grow and more users rely on the platform, performance quickly becomes a real concern. What works for a small rollout often breaks down once adoption spreads across business units and regions.

- Integration with existing ecosystems. New solutions need to fit into the tools already in use, from cloud platforms to analytics and operational systems. When integration is weak, costs rise and adoption slows down.

- Long-term adaptability. Data needs rarely stay fixed for long. Business models shift, regulations change, and analytics requirements evolve, so platforms need to support gradual growth rather than lock enterprises into rigid designs.

These factors are interconnected and rarely evaluated in isolation. Trade-offs between control, flexibility, and operational effort are unavoidable. For this reason, enterprises often assess even the best-rated data quality management solutions as part of a broader data architecture rather than as standalone systems. The most sustainable decisions support long-term alignment across governance, integration, and business ownership.

Building Data Management That Works in Practice

In 2026, effective data management is shaped more by architecture and execution than by tools alone. Enterprises now run data across distributed systems, cloud platforms, and domain-owned environments, making coordination a central challenge. Even the top cloud data management solutions struggle to perform as intended without structural fit.

Integration logic, data quality rules, and scalable pipelines often need custom engineering to reflect how the business actually works. Organizations that see data management as something that evolves over time tend to adapt more easily as needs change. Taking a step back to talk through data challenges and architecture often helps surface where the biggest improvements can be made.

FAQs

What is the difference between data management and MDM?

Data management is a broad discipline that covers how data is collected, integrated, governed, stored, and used across an organization. MDM is a specific practice within data management that focuses on maintaining consistent and trusted records for core business entities such as customers, products, and suppliers.

Do all enterprises need MDM tools?

Not all enterprises need MDM tools. Organizations with limited data complexity or a small number of systems may manage consistency through simpler integration and governance approaches. Enterprises with multiple data domains, duplicate records across systems, or shared data used by many departments are more likely to benefit from MDM.

How long does MDM implementation take?

MDM implementation timelines depend on scope, data complexity, and organizational readiness. Initial MDM implementations often take several months to set up core entities, governance rules, and system integrations. Broader adoption usually happens in phases as more domains, systems, and use cases are added.

Can MDM integrate with ERP, CRM, and cloud platforms?

MDM platforms are built to integrate with ERP systems, CRM tools, and cloud platforms. Integration typically uses APIs, data pipelines, or event-based workflows to exchange and synchronize data. The effort required depends on data models and real-time needs, but integration is a standard part of enterprise MDM environments.
