AI Agent Development Guide: Architecture, Integration, and ROI

This AI agent development guide offers a framework for those who want to understand how AI agents are built and deployed in real-world environments. It covers AI agent architecture, development process, and team composition behind agentic systems, along with common challenges such as security, reliability, and integration.

AI agents are intelligent systems that can reason, plan, and act autonomously toward defined goals. They dynamically interpret context, make decisions, and collaborate with other tools or humans to complete tasks. As enterprises look for adaptive and scalable solutions, agentic AI has become the next stage in intelligent automation, bridging static workflows and autonomous operations.

🟢 Want to understand how agentic systems stack up against legacy automation? Explore our comparison of AI Agents vs. Traditional Automation Tools

Why is AI agent development a priority for tech leaders now?

Enterprises are moving from traditional automation and chatbots toward autonomous AI workflows capable of understanding context, making decisions, and acting independently. While earlier tools like RPA focused on repetitive, rule-based tasks, AI agents combine reasoning, adaptability, and learning. They enable organizations to manage complex and cross-functional operations. With this transition, systems can collaborate with humans, optimize processes in real time, and support long-term strategic goals.

AI agents bring efficiency and innovation, but they also raise important questions about governance, scalability, and ROI. To use them responsibly and make them part of long-term digital transformation, organizations need to understand these factors and plan for them from the start.

🟢 Webinar: Listen to fellow tech leaders discussing AI Agents in the Real World: From Prototypes to Production

Key Factors for Successful AI Agent Adoption

Governance: Balancing Autonomy and Oversight

Leaders need to set clear limits on what AI agents can do on their own, keep transparent records of their actions, and define who’s responsible for major decisions. Ethics, compliance, and risk management should always be part of AI agent software development. With a solid governance model in place, organizations can innovate safely while keeping their systems aligned with company policies and regulations.

Security: Protecting Autonomous Systems

AI agents interact with tools, data systems, and external services, which introduces new security considerations. Without proper controls, autonomous systems may expose sensitive data, trigger unintended actions, or become vulnerable to prompt injection and tool misuse.

In practice, security works best when it is considered from the beginning of architecture and development, becoming part of how systems are designed and operated rather than something added later for compliance.

Scalability: Moving from Prototype to Production

Scaling AI agents requires secure integrations with data systems, efficient infrastructure, and performance optimization across distributed environments. Tech leaders need architectures that support continuous learning, real-time monitoring, and flexible orchestration. Scalable AI agents not only automate tasks but also evolve alongside business needs. In this way, businesses create adaptive digital ecosystems that can expand without compromising reliability.

ROI: Turning Autonomy into Measurable Value

AI agent development is an investment in long-term efficiency and innovation. Measuring ROI means looking beyond cost savings to include improved decision speed, higher productivity, and new value creation. Well-designed agents reduce human workload, optimize resource use, and deliver data-driven insights that enhance competitiveness.

🟢 Explore the evolution from chatbots to agentic AI workflows in AI Chatbots for Business: The Essential Tool

Core Architecture of a Modern AI Agent: How to Build AI Agents

Modern AI agents are built on a modular architecture that lets them think, plan, remember, and act in changing environments. Instead of using one large, rigid system, they bring together several connected components — each handling a specific cognitive or functional task. This design makes AI agents more adaptable, scalable, and easier to maintain, which is why it works well for enterprise-level applications.

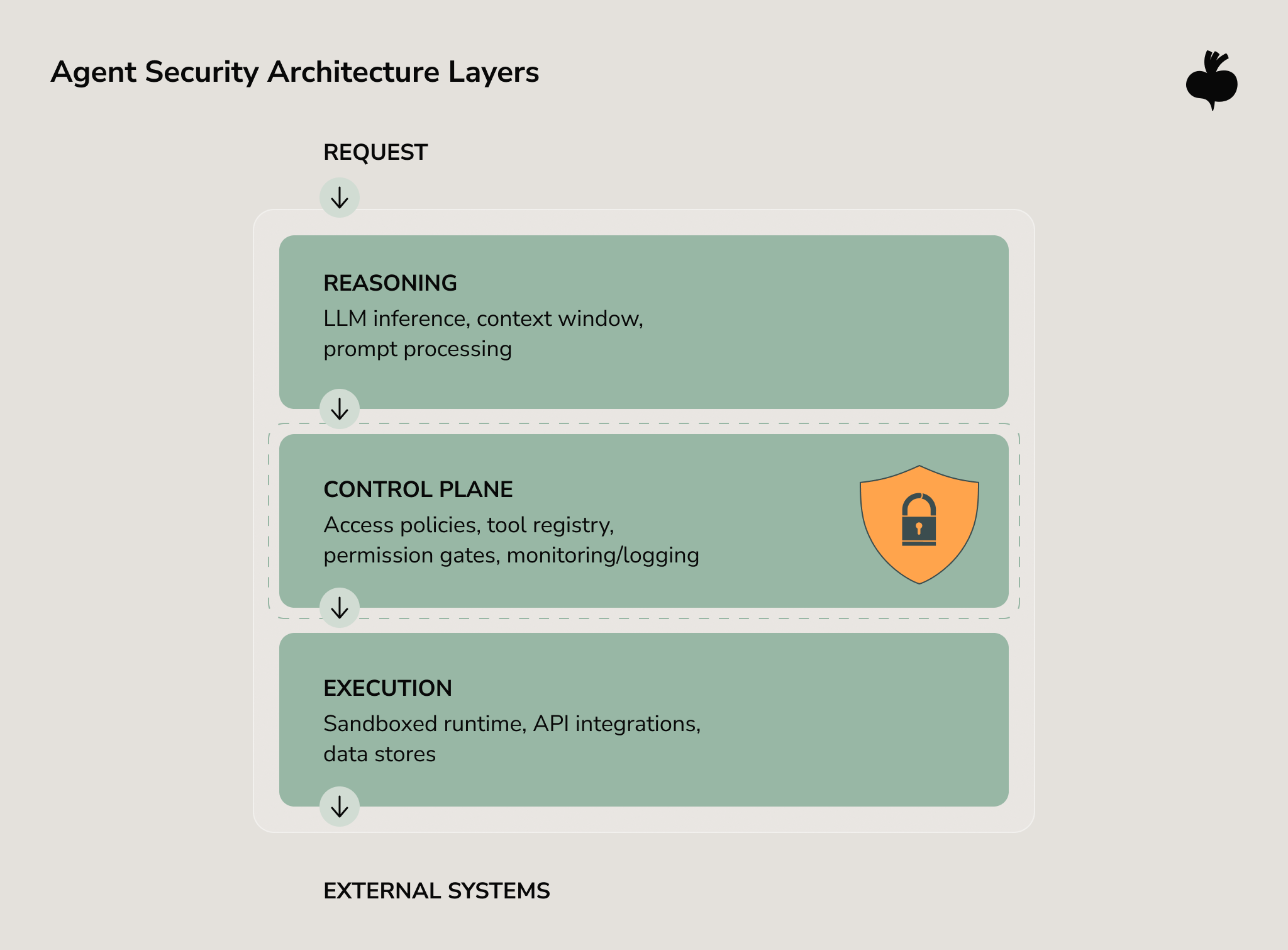

Security and Access Control Layer

Security-driven AI agents rely on clear boundaries between reasoning, control, and execution. The reasoning layer interprets goals and generates actions, but it should not directly interact with sensitive systems or infrastructure.

A control layer typically mediates these interactions by enforcing authentication, permission policies, and validation rules before any external action is executed. Through mechanisms such as scoped credentials, input validation, sandboxed execution environments, and runtime monitoring, organizations can limit the impact of unintended behavior or prompt manipulation.

Designing these control points early helps ensure that agents remain useful and autonomous while operating within clearly defined security boundaries.
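The separation between reasoning and execution can be illustrated with a minimal sketch. Everything named here (`ControlLayer`, `PolicyViolation`, the `support_agent` role, and the two toy tools) is hypothetical and chosen only for illustration; a production control layer would also need authentication, sandboxing, and runtime monitoring.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Set


class PolicyViolation(Exception):
    """Raised when a requested action falls outside the agent's permissions."""


@dataclass
class ControlLayer:
    # Hypothetical policy: the set of tool names each role may call.
    allowed_tools: Dict[str, Set[str]]
    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def execute(self, role: str, tool: str, **kwargs: Any) -> Any:
        # Permission check: the reasoning layer never calls tools directly.
        if tool not in self.allowed_tools.get(role, set()):
            raise PolicyViolation(f"role '{role}' may not call '{tool}'")
        if tool not in self.tools:
            raise PolicyViolation(f"unknown tool '{tool}'")
        # Input validation: accept only simple scalar arguments in this sketch.
        for key, value in kwargs.items():
            if not isinstance(value, (str, int, float, bool)):
                raise PolicyViolation(f"argument '{key}' has an unsupported type")
        return self.tools[tool](**kwargs)


# Toy tools standing in for real integrations.
def lookup_order(order_id: str) -> dict:
    return {"order_id": order_id, "status": "shipped"}


def issue_refund(order_id: str, amount: float) -> dict:
    return {"order_id": order_id, "refunded": amount}


control = ControlLayer(allowed_tools={"support_agent": {"lookup_order"}})
control.register("lookup_order", lookup_order)
control.register("issue_refund", issue_refund)
```

In this sketch, a `support_agent` can look up an order, but a call to `issue_refund` raises `PolicyViolation` because that tool is not in the role's scope.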

🟢 Discover 6 must-have security controls for autonomous AI agents

Reasoning and Planning Layer

This layer takes a goal, breaks it into smaller steps, and works out how to get them done. The agent decides what to do first, makes choices based on logic or past experience, and changes its plan when new information appears. With reasoning frameworks, it can deal with unclear goals and keep working effectively, even as conditions change.
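The plan, act, and replan cycle described above can be sketched as a small loop. This is an illustration under stated assumptions, not how any particular framework works: `run_plan`, `toy_planner`, and `flaky_executor` are hypothetical names, and the planner is a hard-coded stub where a real agent would typically call a language model.

```python
from typing import Callable, Dict, List


def run_plan(
    goal: str,
    plan_fn: Callable[[str, List[str]], List[str]],
    execute_fn: Callable[[str], bool],
    max_replans: int = 2,
) -> List[str]:
    """Execute planned steps in order and replan when a step fails."""
    completed: List[str] = []
    steps = plan_fn(goal, completed)
    replans = 0
    while steps:
        step = steps.pop(0)
        if execute_fn(step):
            completed.append(step)
            continue
        if replans >= max_replans:
            raise RuntimeError(f"giving up on '{goal}' at step '{step}'")
        replans += 1
        # New information (the failure) arrived, so ask for a fresh plan.
        steps = plan_fn(goal, completed)
    return completed


# Stub planner: returns the remaining steps for a fixed, made-up goal.
def toy_planner(goal: str, done: List[str]) -> List[str]:
    all_steps = ["gather data", "draft report", "send report"]
    return [s for s in all_steps if s not in done]


attempts: Dict[str, int] = {}


# Stub executor: "draft report" fails on its first attempt only.
def flaky_executor(step: str) -> bool:
    attempts[step] = attempts.get(step, 0) + 1
    return not (step == "draft report" and attempts[step] == 1)


result = run_plan("weekly report", toy_planner, flaky_executor)
```

Capping replans with `max_replans` is one simple way to keep the loop from running indefinitely when a step keeps failing.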

Communication and Language Understanding

At the heart of most modern AI agents is a large language model that allows them to communicate and reason through text or speech. It can understand what users ask for, pick up on context, and respond clearly and naturally. It also translates between everyday language and technical commands so the agent can take meaningful action.

The language model works like a bridge between the agent’s thinking, memory, and tools. It processes information, decides what to do next, and helps the agent stay focused on its goals.

Tools and Integrations Layer

For AI agents to work effectively, they need to connect with external systems like APIs, databases, CRMs, or IoT devices. This is usually done through API connectors or SDKs for direct data exchange, middleware for handling multi-step workflows, and secure authentication systems that control access and permissions.

With the right integrations, AI agents can think and take action. They can schedule meetings, analyze data, or trigger workflows on their own, becoming active and useful parts of existing digital systems.

Adaptability and Observability: Glue of Effective Agents

When building AI agents, you need to consider two principles: adaptability and observability. Adaptability allows the agent to adjust its behavior when conditions or objectives change, through continuous learning, feedback loops, and modular updates. Observability ensures transparency: every decision, request, and outcome can be monitored, logged, and audited.

Together, these principles enable safe, scalable deployment. Adaptable agents evolve with organizational needs, while observable systems give leaders confidence in performance, compliance, and governance.
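As a rough sketch of what observability can look like in code, the example below keeps a structured record of each decision and tool call so it can be filtered and audited later. The `AuditLog` class and its field names are assumptions made for illustration; real deployments would usually send such records to a dedicated logging or tracing system.

```python
import json
import time
from typing import Any, Dict, List


class AuditLog:
    """In-memory audit trail; a stand-in for a real logging backend."""

    def __init__(self) -> None:
        self.records: List[Dict[str, Any]] = []

    def record(self, event_type: str, **details: Any) -> str:
        # Fixed fields are written last so caller details cannot overwrite them.
        entry = {**details, "ts": time.time(), "event": event_type}
        self.records.append(entry)
        # Return a JSON line, the form most log pipelines can ingest.
        return json.dumps(entry, sort_keys=True)

    def filter(self, event_type: str) -> List[Dict[str, Any]]:
        return [r for r in self.records if r["event"] == event_type]


log = AuditLog()
log.record("decision", step="lookup_order", rationale="user asked for order status")
log.record("tool_call", tool="lookup_order", args={"order_id": "A1"})
log.record("outcome", step="lookup_order", success=True)
```

Recording the rationale next to each decision is what lets a reviewer later reconstruct why the agent acted, not only what it did.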

🟢 Want to explore the inner workings of these components? See Under the Hood: How AI Agents Work in Enterprise Systems

AI Agent Development Process

The AI agent development process is iterative and blends technical innovation with careful oversight. It requires aligning business strategy, choosing the right technologies, and maintaining continuous feedback from design to deployment. Security, governance, and observability should be considered throughout the lifecycle so that the final system remains reliable, controllable, and aligned with enterprise goals.

Strategy & Use Case Definition

Every project should begin with a clear goal and a solid understanding of where AI agents can make a real difference. Companies need to identify the areas where automation adds the most value, set measurable success criteria, and ensure technical plans align with business priorities. This often means reviewing existing workflows, assessing data quality, and defining clear outcomes such as greater efficiency or smarter decision-making.

Organizations should also assess operational and security risks early, identifying which actions an agent can safely perform autonomously and where human oversight or stricter permissions are required.

Tech Stack Selection

The next step is choosing a technology stack that can grow and adapt as your AI agent evolves. This foundation usually includes large language models for reasoning and decision-making, vector databases for retrieving relevant context, and orchestration frameworks to manage multi-step tasks and workflows. Equally important are the integrations, APIs, and secure data pipelines that allow the agent to connect and communicate with other systems.

The architecture should also enforce separation between reasoning and execution layers, ensuring that models cannot directly access sensitive infrastructure without controlled interfaces and permission checks.
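To make the role of a vector database more concrete, here is a minimal sketch of similarity-based retrieval in plain Python. The three-dimensional vectors and document names are made up for illustration; real embeddings come from a model and have hundreds or thousands of dimensions, and a real vector database adds indexing and persistence on top of this idea.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: List[float], store: Dict[str, List[float]], k: int = 2) -> List[str]:
    """Return the ids of the k stored vectors most similar to the query."""
    ranked = sorted(store.items(), key=lambda item: cosine(query, item[1]), reverse=True)
    return [doc_id for doc_id, _ in ranked[:k]]


# Hypothetical toy "embeddings" for three documents.
store = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.1, 0.9, 0.0],
    "privacy notice": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.0]  # pretend embedding of "how do I get my money back?"

print(top_k(query, store))  # ['refund policy', 'shipping times']
```

The retrieved documents would then be passed to the language model as context for its next reasoning step.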

Prototyping & Iteration

Prototyping comes next: building a minimum viable agent that performs core tasks and shows early value. At this stage, developers focus on experimentation, user testing, and continuous improvement. Feedback from real interactions helps refine reasoning patterns, memory handling, and decision logic.

Testing & Guardrails

Testing evaluates performance, reliability, safety, and security. Teams simulate edge cases such as prompt injection, invalid tool calls, and unusual context patterns to ensure the agent behaves within defined constraints.

Guardrails such as rule-based policies, access limits, anomaly detection, and human-in-the-loop oversight help maintain control over autonomous behavior.
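Two of the guardrails above, rejecting invalid tool calls and routing risky actions to a human, can be sketched as follows. The tool names, schemas, and the `HIGH_RISK_TOOLS` set are hypothetical; the point is only to show rule-based checks that run before anything is executed.

```python
from typing import Any, Dict, List

# Hypothetical tool schemas: argument name -> expected Python type.
TOOL_SCHEMAS: Dict[str, Dict[str, type]] = {
    "send_email": {"to": str, "subject": str, "body": str},
    "delete_record": {"record_id": str},
}

# Hypothetical policy: tools that always need a human sign-off.
HIGH_RISK_TOOLS = {"delete_record"}


def validate_tool_call(call: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call is well-formed."""
    name = call.get("tool")
    schema = TOOL_SCHEMAS.get(name)
    if schema is None:
        return [f"unknown tool: {name!r}"]
    args = call.get("args", {})
    errors: List[str] = []
    for param, expected in schema.items():
        if param not in args:
            errors.append(f"missing argument: {param}")
        elif not isinstance(args[param], expected):
            errors.append(f"wrong type for argument: {param}")
    for param in args:
        if param not in schema:
            errors.append(f"unexpected argument: {param}")
    return errors


def requires_human_approval(call: Dict[str, Any]) -> bool:
    return call.get("tool") in HIGH_RISK_TOOLS


# Edge cases a test suite might simulate.
valid_call = {"tool": "send_email", "args": {"to": "a@example.com", "subject": "Hi", "body": "Hello"}}
unknown_tool = {"tool": "drop_database", "args": {}}
missing_arg = {"tool": "send_email", "args": {"to": "a@example.com"}}
risky_call = {"tool": "delete_record", "args": {"record_id": "42"}}
```

Checks like these do not detect prompt injection by themselves, but they limit what an injected instruction can cause the agent to do.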

Deployment & Observability

Deployment marks the start of ongoing responsibility rather than the end of development. Once live, the agent must be continuously monitored to ensure consistent performance and compliance. Observability tools provide real-time insights into decision flows, model outputs, and key performance metrics. Transparent monitoring enables rapid issue resolution, timely model retraining, and long-term optimization. This phase ensures the agent remains accountable, explainable, and aligned with evolving business and governance standards.

🟢 Ensuring safety and accountability? Explore the role of human oversight in Human in the Loop Meets Agentic AI

Building Your Team: Essential Skills

It’s impossible to build AI agents without a combined effort of engineers, data specialists, and business stakeholders. Success depends on technical skills as well as strong communication, governance, and continuous alignment between innovation and oversight. The best-performing teams combine expertise in AI development with a shared understanding of organizational goals and compliance standards.

Core Roles in an AI Agent Development Team

- AI engineers. They design and implement the agent’s reasoning, planning, and interaction logic and ensure that it can process inputs, make decisions, and execute actions effectively.

- Machine learning experts / Data scientists. They train, fine-tune, and evaluate the underlying models; manage data collection, feature engineering, and performance optimization.

- MLOps engineers. They build deployment pipelines and monitor systems to maintain model reliability and scalability. This supports continuous learning and improvement in production.

- Software engineers / backend developers. They develop the integration framework and APIs that connect the agent to enterprise tools, databases, and external systems.

- Quality assurance (QA) engineers. They test the system for functionality, stability, and safety; validate the agent’s reasoning and responses across diverse scenarios.

- Product owners / project managers. They translate business goals into development priorities, manage timelines, and ensure that outcomes align with strategic objectives.

- Data engineers. They construct and maintain secure, high-quality data pipelines that feed training and inference processes.

- AI governance or compliance officers. They establish ethical, legal, and procedural frameworks that guide responsible AI use and regulatory adherence.

Building AI agents is not a linear process; it’s a continuous collaboration between technical and non-technical teams. Engineers, designers, and business leads must share a unified vision of how the agent fits within broader company operations.

🟢 Wondering how team composition affects delivery and cost? Check the AI Development Cost Guide 2026

AI Agent Development Best Practices Incorporated by Beetroot

Building and scaling AI agents takes a partner who understands both the tech and the bigger business picture. We see AI agent development as a collaborative journey where we build something together, not a one-size-fits-all service. Our teams work alongside yours to co-create solutions that fit your existing ecosystem, meet your performance goals, and evolve responsibly over time.

We provide flexible engagement models, from dedicated engineering teams to full-cycle development support. Our expertise spans agentic system design, orchestration, and integration, with a focus on sustainability and long-term value.

Our work combines technical expertise with domain understanding. Through services such as agentic AI solutions, MLOps, generative AI services, and AI chatbot development services, we support the entire AI lifecycle, from experimentation to production-grade deployment. Each engagement is built on transparency, knowledge sharing, and measurable impact.

Key Challenges in Building AI Agents and How to Overcome Them

Developing AI agents that can reason, act, and adapt autonomously requires more than strong model design. Tech leaders working with AI agent development platforms must navigate data volatility, complex system integrations, and ethical as well as operational risks that directly affect performance and trust. Below are five core challenges that often arise in enterprise-grade agentic AI projects.

Data Drift and Model Degradation

Over time, the data environment around an AI agent shifts because user behaviors change, business rules evolve, and new external factors appear. This data drift gradually erodes the agent's accuracy and makes its outputs less relevant. If left unchecked, agents may start producing outdated or inconsistent results.

Teams should continuously monitor key metrics and identify data drift as soon as it appears. Automated retraining based on defined performance thresholds can restore accuracy before problems escalate.
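A threshold-based retraining trigger of the kind described above might look like the sketch below. It tracks accuracy over a rolling window and flags when it falls under a chosen threshold. The class name, window size, and threshold values are assumptions for illustration; a real setup would usually also track input distributions, not only outcomes.

```python
from collections import deque
from typing import Deque, Optional


class AccuracyMonitor:
    """Rolling-window accuracy tracker with a retraining threshold."""

    def __init__(self, window: int = 100, threshold: float = 0.9, min_samples: int = 20) -> None:
        self.outcomes: Deque[bool] = deque(maxlen=window)  # oldest results drop off
        self.threshold = threshold
        self.min_samples = min_samples

    def record(self, correct: bool) -> None:
        self.outcomes.append(correct)

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_retrain(self) -> bool:
        # Avoid reacting to noise before enough samples are collected.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.threshold


# Hypothetical values: small window so the example is easy to follow.
monitor = AccuracyMonitor(window=10, threshold=0.8, min_samples=5)
for _ in range(5):
    monitor.record(True)
healthy = monitor.should_retrain()   # accuracy is 1.0 here

for _ in range(5):
    monitor.record(False)
degraded = monitor.should_retrain()  # accuracy has dropped to 0.5
```

The `min_samples` guard is a design choice that trades detection speed for fewer false alarms.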

Integration Complexity

Most enterprises use a mix of CRMs, ERPs, APIs, and legacy systems with different data formats and communication rules. Without a clear plan, integration can create bottlenecks, data mismatches, and unnecessary complexity, slowing down operations and increasing the risk of errors.

A modular, API-first architecture helps streamline the process. Middleware and orchestration layers can bridge systems and maintain data consistency and secure communication. Clear documentation, standardized authentication, and message formats reduce friction. Embracing cloud-native principles like microservices and event-driven workflows also makes scaling and maintenance easier.

Algorithmic Bias and Fairness

Models can unintentionally repeat or even amplify real-world inequalities when trained on biased data. This makes bias one of the toughest challenges in AI agent development. In autonomous systems, it can quickly build up, leading to unfair results and damaging user trust or brand reputation.

Reducing bias starts with using diverse, transparent datasets and keeping close oversight throughout development. Fairness-auditing tools can spot biased patterns, while human reviews at key points catch issues before release. Clear documentation and solid governance frameworks also strengthen accountability and trust.
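One simple check a fairness audit might include is the demographic parity difference: the gap between the highest and lowest rate of positive outcomes across groups. The sketch below computes it on made-up data; the group labels and outcomes are hypothetical, and this single metric is only one of several fairness measures a team might use.

```python
from typing import Dict, Hashable, Sequence


def selection_rates(outcomes: Sequence[int], groups: Sequence[Hashable]) -> Dict[Hashable, float]:
    """Share of positive outcomes (1) per group."""
    totals: Dict[Hashable, int] = {}
    positives: Dict[Hashable, int] = {}
    for outcome, group in zip(outcomes, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if outcome else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(outcomes: Sequence[int], groups: Sequence[Hashable]) -> float:
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


# Made-up decisions (1 = approved) for two hypothetical groups.
outcomes = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(outcomes, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(outcomes, groups))  # 0.5
```

A large gap does not prove unfair treatment on its own, but it is a signal that a human review of the data and decision logic is warranted.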

Performance and Scalability

As usage grows, models can slow down and compute costs rise. Without proper optimization, real-time performance can drop when agents handle complex reasoning or multi-step workflows. Developers use techniques like model compression and quantization to reduce computational load while largely preserving accuracy. With smart scaling and cost-aware design, organizations can build AI agents that stay fast, stable, and efficient even as demand increases.

Trust, Transparency, and Interpretability

For AI agents to operate autonomously, stakeholders need to understand and trust their decisions. Yet complex models often act as black boxes, making it hard to explain actions or trace errors. This lack of clarity can slow adoption, raise compliance concerns, and complicate debugging in enterprise settings.

Explainable AI methods, detailed documentation, and audit trails help make an agent’s decision-making process transparent. Human oversight adds an extra layer of accountability for sensitive tasks, while governance frameworks set clear rules for when agents are allowed to act on their own.

| Challenge | Description | Solution |
| --- | --- | --- |
| Data Drift and Model Degradation | Model accuracy declines as real-world data and user behavior change. | Continuously monitor performance, retrain models when drift is detected, and maintain version control for adaptive accuracy. |
| Integration Complexity | Linking AI agents with CRMs, ERPs, and legacy systems can cause data and workflow issues. | Use API-first, modular architecture with middleware for interoperability and scalable cloud-based integration. |
| Algorithmic Bias and Fairness | Unbalanced data or flawed logic leads to unfair or unreliable outcomes. | Diversify datasets, run bias audits, involve human oversight, and retrain regularly to uphold fairness. |
| Performance and Scalability | High compute demands and latency limit real-time responsiveness. | Optimize models, apply distributed inference, and use autoscaling to balance speed, cost, and reliability. |
| Trust and Interpretability | Opaque decisions reduce user confidence and hinder compliance. | Apply explainable AI, log decision rationales, and set governance rules for transparent, accountable behavior. |

🟢 Discover how similar challenges are tackled in sustainability-driven AI initiatives — see AI Chatbots and Virtual Assistants in Green Tech

Shaping the Future of Intelligent Workflows

AI agents represent more than a technological shift; they signal a new era of collaboration between humans and intelligent systems. From strategy and architecture to testing and governance, building them requires thoughtful design, iteration, and AI agent development best practices.

At Beetroot, we help organizations turn agentic concepts into reliable, production-ready solutions that deliver long-term value. Whether you’re exploring use cases or scaling an existing prototype, our team can consult and help you navigate different stages of development.

FAQ

How do you measure ROI from agentic AI?

Measuring ROI from agentic AI means looking at both numbers and real-world impact. On the quantitative side, it’s about things like faster processing, lower costs, higher output, and more accurate workflows. On the qualitative side, it’s about improvements that are harder to measure but just as important: faster decision-making, more productive teams, and happier customers.

When you look at both, you get a clearer view of how AI agents drive efficiency and overall performance. Tracking short-term results alongside long-term business outcomes gives the most complete picture of how agentic AI contributes to growth and lasting value.
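For the quantitative side, the standard ROI formula is net benefit divided by cost. The sketch below applies it, along with a simple payback period, to figures that are entirely hypothetical and used only to show the arithmetic.

```python
def roi(total_benefit: float, total_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefit - total_cost) / total_cost


def payback_months(total_cost: float, monthly_net_benefit: float) -> float:
    """Months needed for cumulative net benefit to cover the initial cost."""
    if monthly_net_benefit <= 0:
        raise ValueError("monthly_net_benefit must be positive")
    return total_cost / monthly_net_benefit


# Hypothetical figures: 100,000 invested, 150,000 in measured benefit.
print(roi(150_000, 100_000))            # 0.5, i.e. 50%
print(payback_months(100_000, 12_500))  # 8.0
```

Qualitative gains such as decision speed or customer satisfaction do not fit this formula directly and are usually tracked alongside it as separate indicators.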

How do you prevent critical agentic errors?

Avoiding serious mistakes in AI agents requires a thoughtful, layered approach to safety. It begins with clear rules, ongoing testing, and human oversight to keep decision-making on the right track. Each possible action should be tested in both simulated and real-world situations to uncover issues before they cause real problems.

As the agent continues to learn and adapt, it also needs firm guardrails that keep it from going off course or making risky decisions. These safety measures help balance autonomy with control, allowing the agent to improve over time without losing stability or reliability. When testing and oversight work together, teams can build AI systems that are both capable and trustworthy.

How long does it take to build an AI agent prototype?

Building an AI agent prototype usually takes between 4 and 8 weeks, depending on the project’s complexity, data readiness, and integration scope. The AI agent development process starts with defining clear objectives, designing the agent’s reasoning and interaction capabilities, and preparing the data pipeline.

Developers then select appropriate models or frameworks, build the first working version, and conduct iterative testing. For more complex use cases, such as multimodal reasoning, advanced NLP, or integration with enterprise systems, the timeline may extend to several months. Proper planning, data preparation, and continuous feedback cycles are crucial to achieving a reliable, functional AI agent prototype within a predictable timeframe.

What’s the biggest challenge in AI agent development?

The biggest challenge in AI agent development is finding the right balance between freedom and control. Developers need to build systems that can think and act on their own while still staying safe, reliable, and easy to understand. Avoiding mistakes, false outputs, or ethical problems takes clear rules, human supervision, and careful checks on the data being used.

Maintaining long-term performance as the agent encounters real-world variability also presents difficulties. Retaining context across sessions, managing ambiguous user inputs, and ensuring fairness across diverse datasets add complexity. Ultimately, the most demanding part is designing agents that can act flexibly and intelligently within human-defined boundaries, adapting to new information while remaining predictable and compliant with organizational standards.